There have been reports of another high-profile website launching with performance and availability problems: the Obamacare website, e.g. http://news.yahoo.com/analysis-experts-architecture-obamacare-website-15....

This seems surprising in these days of virtualised infrastructure, quite sophisticated frameworks and testing environments. Yet as developers we commonly see websites or infrastructure that are just creaking along or were just 'thrown out there'. Obamacare is a big, highly visible project which one would expect to be well funded and well organised. Funding and organisation are, in my experience, the main risks, as there are few things performance-wise that technology can't handle given planning and resources. We have seen the 'let's just do something, we can't really afford to test it' project, and the project where it was hard enough to get functionality agreed on, let alone get it built. More often it is the 'lofty ambitions' problem that staged releases try to address, combined with pressure to do it fast and cheap, even at big companies.

Looking at some of the reported deficiencies of the site, it does match our experience of 'crash-through' projects, by the end of which everybody is burnt out. Given the resources, I think that website builders would really like to see smoke coming out of computers instead: it is quite interesting to see what they are capable of and how to break them.

Let's take a look at Obamacare and the analysis.

Website Performance

Technically there are some strange statements in this analysis, e.g.:

"For instance, when a user tries to create an account on HealthCare.gov, which serves insurance exchanges in 36 states, it prompts the computer to load an unusually large amount of files and software, overwhelming the browser, experts said."

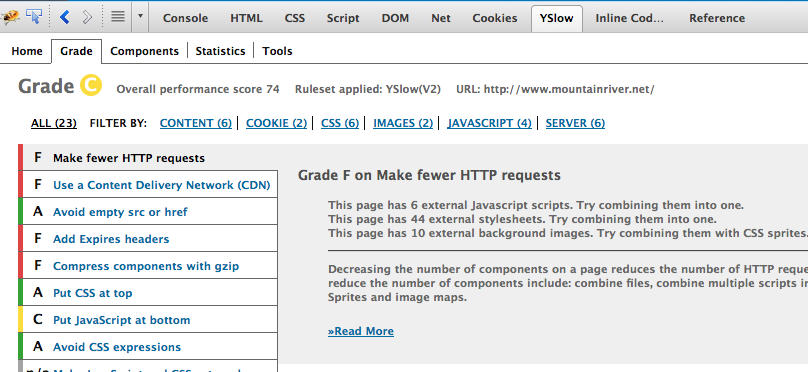

This is strange because it seems to reference problems with some very basic performance tuning. For example, Internet Explorer has limits on the number of CSS files that a page can load. This sometimes comes up when we are testing sites, as the following will show, but on a production site it is quite strange.

To start with, this is a functionality issue and quite easy to test for. Running tests from within browser inspection tools such as Firefox developer tools shows the problem on this site: 44 external stylesheets.
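The same check can be automated outside the browser. The following is a minimal sketch, not the tooling used for the screenshots here: it counts the external stylesheets referenced by a page's HTML using only the Python standard library. The names `StylesheetCounter`, `count_stylesheets` and `exceeds_limit` are made up for illustration, and the default limit of 31 is the figure commonly quoted for older Internet Explorer versions, so treat it as an assumption and adjust it for the browsers you care about.

```python
from html.parser import HTMLParser


class StylesheetCounter(HTMLParser):
    """Collects the href of every <link rel="stylesheet"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.stylesheets = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        # rel can hold several space-separated values, e.g. "alternate stylesheet"
        rel_values = (attributes.get("rel") or "").lower().split()
        if "stylesheet" in rel_values and attributes.get("href"):
            self.stylesheets.append(attributes["href"])


def count_stylesheets(html):
    """Return the number of external stylesheets referenced by the HTML."""
    parser = StylesheetCounter()
    parser.feed(html)
    return len(parser.stylesheets)


def exceeds_limit(html, limit=31):
    """True if the page links more stylesheets than the assumed browser limit."""
    return count_stylesheets(html) > limit
```

Fed the HTML of a page with 44 stylesheet links, `count_stylesheets` returns 44 and `exceeds_limit` returns `True`, which is the kind of check that could sit in an automated test run before a release.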

In the old days we used to have to concatenate our CSS together and run JavaScript through minifiers by hand. Not hugely onerous, but all the frameworks that I have used recently have it built in, including Drupal, Grails, Joomla!, WordPress and ExpressionEngine.
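To give a feel for what the "by hand" step involves, here is a rough sketch of concatenating several stylesheets and stripping comments and whitespace. It is deliberately naive (it would mangle whitespace inside quoted strings, for instance) and is not what any of the frameworks above actually do; `concatenate_css` and `minify_css` are hypothetical helper names.

```python
import re


def concatenate_css(sources):
    """Join the text of several stylesheets into one, in the given order."""
    return "\n".join(sources)


def minify_css(css):
    """Naive minifier: drops comments and collapses whitespace."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.S)  # remove /* ... */ comments
    css = re.sub(r"\s+", " ", css)  # collapse runs of whitespace to one space
    css = re.sub(r"\s*([{};])\s*", r"\1", css)  # no spaces around { } ;
    return css.strip()
```

The point is that many small files become one request, which is exactly what the framework settings automate.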

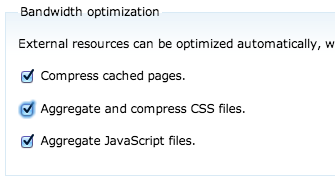

In Grails the resources plugin simply does the resource optimisation, and in Drupal we have a settings screen to turn optimisation on and off.

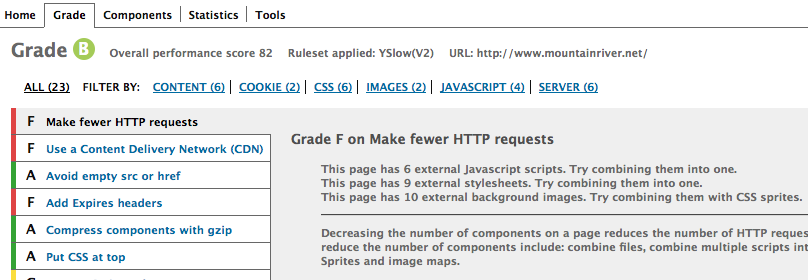

Now there are fewer CSS files in particular - 9 instead of 44.

We could go further with half a day's effort, but that shows how simple it is to use the framework's optimisation.

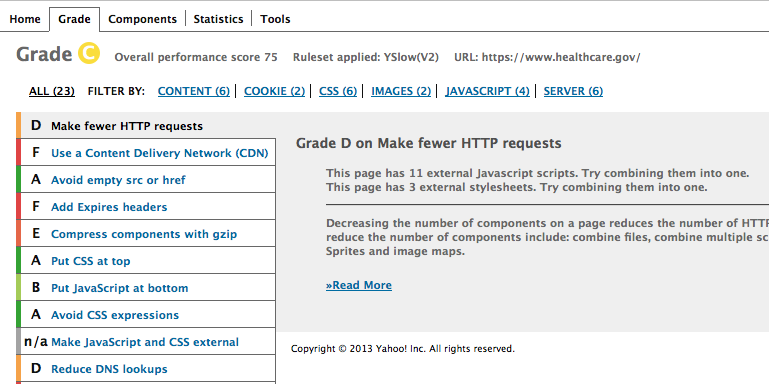

Switching between optimised and unoptimised CSS is useful: while we are inspecting our CSS and JavaScript we can see the code in the browser as it was written, and on the live site we toggle a button and the files are optimised. Sure, Internet Explorer does still hurt us: with the way themes and CSS frameworks are going there are lots of files, so we can't actually view the site in development mode in Internet Explorer (development processes being a whole other story). Maybe the Obamacare developers were just trying to debug the site when the boffins were doing their analysis? Currently it is polished, as one would expect with so much focus on it.

Infrastructure Performance - What about the Cloud?

'Cloud' in this case just means something virtualised and scalable. There are technologies such as Google App Engine (GAE) and Amazon hosting that harness the power of Google's and Amazon's infrastructure respectively. Hosting companies on virtualised hardware commonly provide the ability to cluster computers together to provide increased computing power.

This should mean that as load on the server increases, more resources can be brought online without anybody noticing. There are a number of application, privacy and operational things to consider. However, Acquia is providing hosting for the NSW government based on Amazon, and we have used Heroku for large companies, which provides a development operations workflow on top of Amazon. At other organisations we have used cluster technology, and we would be happy clustering the RimuHosting VPSs that we usually use. Other organisations we have worked with use the Akamai cache, which takes the load of serving static files off the system.

So why isn't Obamacare able to scale out its infrastructure like this? Highly transactional and secure sites, which I expect Obamacare is, can sometimes be more complicated. But there are sophisticated session migration tools and some quite amazing database synchronisation tools, so why is a big question. Could it be testing?

Testing

"Government officials blame the persistent glitches on an overwhelming crush of users - 8.6 million unique visitors by Friday - trying to visit the HealthCare.gov website this week."

This comes down to either a process or a budget issue. Either testing wasn't 'built into the process', or releases of the software were far enough off the rails that a load testing window on built-up infrastructure was just skipped. Perhaps the infrastructure resources to thrash the servers at the expected rate of access, at 10 times that rate and at 100 times that rate were just not provided. With virtualised hosting this is far easier today than in the past. There is good testing software that lets the load on a site be 'ramped up' to run a realistic simulation. Testing would almost certainly have established the expected behaviour of the system under various load levels (hopefully linear scaling) and made it possible to develop contingency plans.
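As a rough illustration of what 'ramping up' means, the sketch below runs the same request at 1, 10 and 100 times an expected number of concurrent users and records the latencies at each stage. It is an assumption-laden toy, not a substitute for a real load testing tool: `run_stage`, `ramp` and `fake_request` are invented names, and `fake_request` just sleeps for a millisecond in place of a real HTTP call to a test environment.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def timed_call(request_fn):
    """Run one request and return how long it took, in seconds."""
    start = time.perf_counter()
    request_fn()
    return time.perf_counter() - start


def run_stage(request_fn, concurrent_users, requests_per_user=5):
    """Issue requests from a pool of worker threads and summarise latency."""
    total = concurrent_users * requests_per_user
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        latencies = sorted(pool.map(timed_call, [request_fn] * total))
    return {
        "users": concurrent_users,
        "requests": total,
        "median_s": latencies[len(latencies) // 2],  # middle value (upper median)
        "worst_s": latencies[-1],
    }


def ramp(request_fn, expected_users, multipliers=(1, 10, 100)):
    """Run one stage per multiplier: expected load, 10x and 100x by default."""
    return [run_stage(request_fn, expected_users * m) for m in multipliers]


def fake_request():
    """Stand-in for a real request; assumed to take about a millisecond."""
    time.sleep(0.001)
```

Calling `ramp(fake_request, expected_users=2)` returns one summary per stage. With a real request function, comparing the median and worst latencies across stages shows whether the system degrades gracefully or falls over, which is the information needed for a contingency plan.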

Conclusion

With all this technology, and the demonstrable capability of sites like Google to scale out, why are so many sites launched that create press for all the wrong reasons in their first week? For retail sites an overloaded site could be good press, but it is still lost sales. 'Wow, our site handled $x million in transactions' would work just as well. For government sites it clearly just hinders the program and is an embarrassment. Plan for success - lots of visitors to the site - and test.

http://www.infoq.com/news/2013/11/Healthcare.gov-Perf-Analysis